Installing Hadoop

This is a detailed step-by-step guide for installing Hadoop on Windows, Linux or Mac. It is based on Hadoop 1.0.0, the first official stable version and the current one at the time of writing. That release is itself based on version 0.20.0 (note that there was also a 0.21.0 version). Installing Hadoop on Linux or Mac is fairly straightforward. Getting it to run on Windows, however, can be a bit tricky.

You would probably not run Hadoop on Windows in a production environment, but it can be convenient as a development environment. If you are using Linux or Mac, just skip the Windows information.

Windows installation

Hadoop can be installed on Windows using Cygwin (not intended for production environments), but there are several Cygwin installation and configuration issues to deal with.


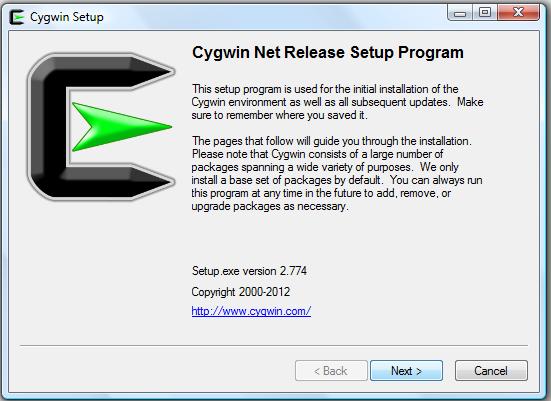

Windows: Download and install Cygwin

Cygwin is an implementation of a set of Linux commands and applications for Windows. Download the web installer from the Cygwin website and run it. The installer will request some information before installing:

- Installation method. Select “Install from Internet”.
- Root Directory. The default is c:\cygwin. Accept this directory.
- Local Package Directory (the directory where install files will be downloaded). The default is c:\cygwin-packages. Accept this directory.
- Connection and download site.

A list of available packages will then be displayed. The following packages are not selected by default, so make sure to include them:

- openssh
- openssl
- tcp_wrappers
- diffutils

If several options are listed for a package (e.g. openssl), include them all. When the installation completes, it creates a Cygwin icon on the Desktop and/or in the Start menu. Click it to open a Cygwin window.

Configuring SSH on Windows

Hadoop requires SSH (Secure SHell) to be running. To configure it, open a Cygwin window and type:

    ssh-host-config

Use the following installation options:

- Should privilege separation be used? (yes/no): no
- Do you want to install sshd as a service?: yes
- Enter the value of CYGWIN for the daemon: ntsec
- If asked for an account name, specify cyg_server with a password you will remember.

For example:

    $ ssh-host-config

    Info: Generating /etc/ssh_host_key
    Info: Generating /etc/ssh_host_rsa_key
    Info: Generating /etc/ssh_host_dsa_key
    Info: Generating /etc/ssh_host_ecdsa_key
    Info: Creating default /etc/ssh_config file
    Info: Creating default /etc/sshd_config file
    Info: Privilege separation is set to yes by default since OpenSSH 3.3.
    Info: However, this requires a non-privileged account called 'sshd'.
    Info: For more info on privilege separation read /usr/share/doc/openssh/README.privsep.
    Query: Should privilege separation be used? (yes/no) no
    Info: Updating /etc/sshd_config file
    Query: Do you want to install sshd as a service?
    Query: (Say 'no' if it is already installed as a service) (yes/no) yes
    Query: Enter the value of CYGWIN for the daemon: ntsec
    Info: On Windows Server 2003, Windows Vista, and above, the
    Info: SYSTEM account cannot setuid to other users - a capability
    Info: sshd requires. You need to have or to create a privileged
    Info: account. This script will help you do so.
    Info: You appear to be running Windows XP 64bit, Windows 2003 Server,
    Info: or later. On these systems, it's not possible to use the LocalSystem
    Info: account for services that can change the user id without an
    Info: explicit password (such as passwordless logins e.g. public key
    Info: authentication via sshd).
    Info: If you want to enable that functionality, it's required to create
    Info: a new account with special privileges (unless a similar account
    Info: already exists). This account is then used to run these special
    Info: servers.
    Info: Note that creating a new user requires that the current account
    Info: have Administrator privileges itself.
    Info: No privileged account could be found.
    Info: This script plans to use 'cyg_server'.
    Info: 'cyg_server' will only be used by registered services.
    Query: Do you want to use a different name? (yes/no) no
    Query: Create new privileged user account 'cyg_server'? (yes/no) yes
    Info: Please enter a password for new user cyg_server. Please be sure
    Info: that this password matches the password rules given on your system.
    Info: Entering no password will exit the configuration.
    Query: Please enter the password:
    Query: Reenter: Enter password
    Info: User 'cyg_server' has been created with password '####'.
    Info: If you change the password, please remember also to change the
    Info: password for the installed services which use (or will soon use)
    Info: the 'cyg_server' account.
    Info: Also keep in mind that the user 'cyg_server' needs read permissions
    Info: on all users' relevant files for the services running as 'cyg_server'.
    Info: In particular, for the sshd server all users' .ssh/authorized_keys
    Info: files must have appropriate permissions to allow public key
    Info: authentication. (Re-)running ssh-user-config for each user will set
    Info: these permissions correctly. Similar restrictions apply, for
    Info: instance, for .rhosts files if the rshd server is running, etc.
    Info: The sshd service has been installed under the 'cyg_server'
    Info: account. To start the service now, call `net start sshd' or
    Info: `cygrunsrv -S sshd'. Otherwise, it will start automatically
    Info: after the next reboot.

    Info: Host configuration finished.

The installation script creates:

- configuration files:
  - /etc/ssh_config
  - /etc/ssh_host_dsa_key
  - /etc/ssh_host_ecdsa_key
  - /etc/ssh_host_key
  - /etc/ssh_host_rsa_key
  - /etc/sshd_config
- a cyg_server privileged account
- an sshd Windows service, using the specified account and password, and listed under the name CYGWIN sshd

Under Windows, specify paths using the full format.

For example, set the property dfs.name.dir to the value file:///c:/hdfs/name.

Start Hadoop

Format NameNode

Before starting Hadoop, you have to format the NameNode. This is the node that holds the file system structure. To format the NameNode, run:

    cd /usr/local/hadoop-1.0.0
    ./bin/hadoop namenode -format

Several files will be created under the directory defined by the configuration key dfs.name.dir.

Start HDFS

    bin/start-dfs.sh

Check that HDFS is running by browsing to the NameNode web interface. A web page should be displayed with DFS information, where you can view and browse the directory structure. If you run into any issue, check the log files under hadoop-1.0.0/logs/ for errors. You can also browse the file system using bin/hadoop fs -ls. Type bin/hadoop fs for the complete set of commands.
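The advice to check the log files for errors can be turned into a small helper. The following is only a sketch: the function name `scan_hadoop_logs` and the choice of `ERROR` and `FATAL` as the markers to look for are assumptions, not something Hadoop prescribes.

```shell
#!/bin/sh
# Print every line under a Hadoop log directory that mentions ERROR or FATAL.
# The function name and the search pattern are illustrative assumptions.
scan_hadoop_logs() {
    log_dir=$1
    if [ ! -d "$log_dir" ]; then
        echo "log directory not found: $log_dir" >&2
        return 2
    fi
    # -r: recurse into the directory, -n: show line numbers, -E: extended regex.
    # grep exits non-zero when nothing matches, which is reported as "clean".
    grep -rnE 'ERROR|FATAL' "$log_dir" || echo "no errors found in $log_dir"
}
```

It could be called as `scan_hadoop_logs /usr/local/hadoop-1.0.0/logs` after starting HDFS. Each match is printed with its file name and line number.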

Hadoop is supported on the GNU/Linux platform and its flavors, so a Linux operating system is needed to set up a Hadoop environment. If you have an OS other than Linux, you can install VirtualBox and run Linux inside it.

Pre-installation Setup

Before installing Hadoop in the Linux environment, we need to set up Linux using ssh (Secure Shell). Follow the steps given below to set up the Linux environment.

Creating a User

At the beginning, it is recommended to create a separate user for Hadoop to isolate the Hadoop file system from the Unix file system. Follow these steps to create a user:

- Open the root account using the command “su”.
- Create a user from the root account using the command “useradd username”.
- Now you can open an existing user account using the command “su username”.

Open the Linux terminal and type the following commands to create a user.

    $ su
    password:
    # useradd hadoop
    # passwd hadoop
    New passwd:
    Retype new passwd

SSH Setup and Key Generation

SSH setup is required to perform different operations on a cluster, such as starting and stopping the distributed daemons and running shell operations. To authenticate different users of Hadoop, you need to provide a public/private key pair for a Hadoop user and share it with the other users. The following commands generate a key pair using SSH, copy the public key from id_rsa.pub to authorized_keys, and give the owner read and write permissions on the authorized_keys file.

    $ ssh-keygen -t rsa
    $ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
    $ chmod 0600 ~/.ssh/authorized_keys

Installing Java

Java is the main prerequisite for Hadoop. First of all, verify that Java is present on your system:

    $ java -version

If everything is in order, it will give you output like the following.

    java version "1.7.0_71"
    Java(TM) SE Runtime Environment (build 1.7.0_71-b13)
    Java HotSpot(TM) Client VM (build 25.0-b02, mixed mode)

If Java is not installed on your system, follow the steps given below to install it.

Step 1

Download Java (JDK - X64.tar.gz) from its download page. The file jdk-7u71-linux-x64.tar.gz will then be downloaded to your system.

Step 2

Generally you will find the downloaded file in the Downloads folder. Verify it and extract the jdk-7u71-linux-x64.tar.gz file using the following commands.

    $ cd Downloads/
    $ ls
    jdk-7u71-linux-x64.tar.gz
    $ tar zxf jdk-7u71-linux-x64.tar.gz
    $ ls
    jdk1.7.0_71 jdk-7u71-linux-x64.tar.gz

Step 3

To make Java available to all users, move it to the location “/usr/local/”. Open root, and type the following commands.

    $ su
    password:
    # mv jdk1.7.0_71 /usr/local/
    # exit

Step 4

To set up the PATH and JAVA_HOME variables, add the following commands to the ~/.bashrc file.

    export JAVA_HOME=/usr/local/jdk1.7.0_71
    export PATH=$PATH:$JAVA_HOME/bin

Now apply the changes to the current running system.

    $ source ~/.bashrc

Step 5

Use the following commands to configure the Java alternatives:

    # alternatives --install /usr/bin/java java /usr/local/jdk1.7.0_71/bin/java 2
    # alternatives --install /usr/bin/javac javac /usr/local/jdk1.7.0_71/bin/javac 2
    # alternatives --install /usr/bin/jar jar /usr/local/jdk1.7.0_71/bin/jar 2
    # alternatives --set java /usr/local/jdk1.7.0_71/bin/java
    # alternatives --set javac /usr/local/jdk1.7.0_71/bin/javac
    # alternatives --set jar /usr/local/jdk1.7.0_71/bin/jar

Now verify the installation by running java -version from the terminal as explained above.

Downloading Hadoop

Download and extract Hadoop 2.4.1 from the Apache Software Foundation using the following commands (the wget argument needs the full download URL of the archive).

    $ su
    password:
    # cd /usr/local
    # wget hadoop-2.4.1.tar.gz
    # tar xzf hadoop-2.4.1.tar.gz
    # mv hadoop-2.4.1 hadoop
    # exit

Hadoop Operation Modes

Once you have downloaded Hadoop, you can operate your Hadoop cluster in one of the three supported modes:

- Local/Standalone Mode: After downloading Hadoop to your system, it is configured in standalone mode by default and can be run as a single Java process.
- Pseudo Distributed Mode: A distributed simulation on a single machine. Each Hadoop daemon, such as HDFS, YARN, MapReduce etc., runs as a separate Java process. This mode is useful for development.
- Fully Distributed Mode: This mode is fully distributed, with a minimum of two machines forming a cluster. We will come across this mode in detail in the coming chapters.

Installing Hadoop in Standalone Mode

Here we will discuss the installation of Hadoop 2.4.1 in standalone mode. There are no daemons running and everything runs in a single JVM. Standalone mode is suitable for running MapReduce programs during development, since it is easy to test and debug them.
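Before running anything in standalone mode, it can help to confirm the prerequisites configured earlier: a usable JDK directory and a non-empty authorized keys file. The sketch below is a convenience check only. The function name `preflight` and its messages are invented for illustration and are not part of Hadoop.

```shell
#!/bin/sh
# Report problems with the prerequisites set up earlier in the guide.
# Prints one line per problem and returns non-zero if any were found.
# The function name and messages are illustrative, not part of Hadoop.
preflight() {
    java_home=$1    # JDK install directory (the JAVA_HOME value)
    auth_keys=$2    # path to the SSH authorized keys file
    problems=0

    if [ ! -x "$java_home/bin/java" ]; then
        echo "no executable java under $java_home/bin"
        problems=$((problems + 1))
    fi
    if [ ! -s "$auth_keys" ]; then
        echo "authorized keys file missing or empty: $auth_keys"
        problems=$((problems + 1))
    fi
    # Succeed only when no problem was counted.
    [ "$problems" -eq 0 ]
}
```

It takes the JDK directory and the keys file as arguments, for instance the JAVA_HOME value and the authorized keys file in the Hadoop user's .ssh directory.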

Setting Up Hadoop

You can set the Hadoop environment variables by appending the following command to the ~/.bashrc file.

    export HADOOP_HOME=/usr/local/hadoop

Before proceeding further, make sure that Hadoop is working fine. Just issue the following command:

    $ hadoop version

If everything is fine with your setup, you should see the following result:

    Hadoop 2.4.1
    Subversion -r 1529768
    Compiled by hortonmu on 2013-10-07T06:28Z
    Compiled with protoc 2.5.0
    From source with checksum 79e53ce7994d1628b240f09af91e1af4

This means your Hadoop standalone mode setup is working fine. By default, Hadoop is configured to run in a non-distributed mode on a single machine.

Example

Let's check a simple example of Hadoop. The Hadoop installation delivers the following example MapReduce jar file, which provides basic MapReduce functionality and can be used for calculations such as the value of Pi, word counts in a given list of files, etc. The version number in the file name follows the installed Hadoop release.

    $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.2.0.jar

Let's have an input directory where we will push a few files. Our requirement is to count the total number of words in those files. To calculate the total number of words, we do not need to write our own MapReduce program, since the .jar file contains an implementation of word count. You can try other examples using the same .jar file. Just issue the following command to list the MapReduce programs supported by the hadoop-mapreduce-examples-2.2.0.jar file.

    $ hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.2.0.jar

Step 1

Create temporary content files in the input directory. You can create this input directory anywhere you would like to work.

    $ mkdir input
    $ cp $HADOOP_HOME/*.txt input
    $ ls -l input

It will give the following files in your input directory:

    total 24
    -rw-r--r-- 1 root root 15164 Feb 21 10:14 LICENSE.txt
    -rw-r--r-- 1 root root   101 Feb 21 10:14 NOTICE.txt
    -rw-r--r-- 1 root root  1366 Feb 21 10:14 README.txt

These files have been copied from the Hadoop installation home directory. For your experiment, you can use different and larger sets of files.
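For a rough cross-check of what a word count over these input files should look like, the same tally can be approximated locally with standard tools. This is a sketch, not the Hadoop implementation. It assumes words are separated by whitespace, and the function name `local_wordcount` is made up for illustration. It is practical only for small inputs.

```shell
#!/bin/sh
# Count whitespace-separated words across the given files and print
# "word<TAB>count" lines sorted by word. A local approximation of a
# word count job, intended only for small inputs.
local_wordcount() {
    cat "$@" |
        tr -s '[:space:]' '\n' |    # put one word per line
        grep -v '^$' |              # drop the empty line left by leading whitespace
        LC_ALL=C sort |             # byte-order sort so equal words are adjacent
        uniq -c |                   # prefix each distinct word with its count
        awk '{ print $2 "\t" $1 }'  # reorder to word, then count
}
```

Running `local_wordcount input/*.txt` prints one line per distinct word, which can be compared by eye with the job output for a small sample.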

Step 2

Let's start the Hadoop process to count the total number of words in all the files available in the input directory, as follows:

    $ hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.2.0.jar wordcount input output

Step 3

Step 2 does the required processing and saves the output in the output/part-r-00000 file, which you can check by using:

    $ cat output/*

It lists all the words, along with their total counts, found in the files in the input directory.

    "AS 4
    "Contribution" 1
    "Contributor" 1
    "Derivative 1
    "Legal 1
    "License" 1
    "License"); 1
    "Licensor" 1
    "NOTICE" 1
    "Not 1
    "Object" 1
    "Source" 1
    "Work" 1
    "You" 1
    "Your") 1
    " 1
    "control" 1
    "printed 1
    "submitted" 1
    (50%) 1
    (BIS), 1
    (C) 1
    (Don't) 1
    (ECCN) 1
    (INCLUDING 2
    (INCLUDING, 2

Installing Hadoop in Pseudo Distributed Mode

Follow the steps given below to install Hadoop 2.4.1 in pseudo distributed mode.

Step 1: Setting Up Hadoop

You can set the Hadoop environment variables by appending the following commands to the ~/.bashrc file.

    export HADOOP_HOME=/usr/local/hadoop
    export HADOOP_MAPRED_HOME=$HADOOP_HOME
    export HADOOP_COMMON_HOME=$HADOOP_HOME
    export HADOOP_HDFS_HOME=$HADOOP_HOME
    export YARN_HOME=$HADOOP_HOME
    export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native
    export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin
    export HADOOP_INSTALL=$HADOOP_HOME

Now apply the changes to the current running system.

    $ source ~/.bashrc

Step 2: Hadoop Configuration

You can find all the Hadoop configuration files in the location “$HADOOP_HOME/etc/hadoop”. You need to change those configuration files according to your Hadoop infrastructure.
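Each of the files edited in this step holds name/value properties inside a `configuration` element. As a sketch of that layout, the helper below prints such a document from name/value pairs. The helper name `write_site_xml` is hypothetical, and it writes values verbatim without XML escaping, so it only suits simple values like the ones used in this guide.

```shell
#!/bin/sh
# Print a Hadoop-style configuration document to stdout.
# Arguments are name/value pairs: write_site_xml NAME VALUE [NAME VALUE ...]
# The helper name is illustrative; values are written verbatim (no XML escaping).
write_site_xml() {
    echo '<?xml version="1.0"?>'
    echo '<configuration>'
    # Consume the arguments two at a time: property name, then its value.
    while [ "$#" -ge 2 ]; do
        printf '  <property>\n'
        printf '    <name>%s</name>\n' "$1"
        printf '    <value>%s</value>\n' "$2"
        printf '  </property>\n'
        shift 2
    done
    echo '</configuration>'
}
```

For example, `write_site_xml fs.default.name hdfs://localhost:9000` prints a document with a single property, which can be redirected into a file once it looks right.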

    $ cd $HADOOP_HOME/etc/hadoop

In order to develop Hadoop programs in Java, you have to reset the Java environment variables in the hadoop-env.sh file by replacing the JAVA_HOME value with the location of Java on your system.

    export JAVA_HOME=/usr/local/jdk1.7.0_71

The following is the list of files that you have to edit to configure Hadoop.

core-site.xml

The core-site.xml file contains information such as the port number used for the Hadoop instance, the memory allocated for the file system, the memory limit for storing data, and the size of the read/write buffers. Open core-site.xml and add the following properties between the <configuration> and </configuration> tags.

    <configuration>
       <property>
          <name>fs.default.name</name>
          <value>hdfs://localhost:9000</value>
       </property>
    </configuration>

hdfs-site.xml

The hdfs-site.xml file contains information such as the replication value, the namenode path, and the datanode paths on your local file system, that is, the place where you want to store the Hadoop infrastructure. Let us assume the following data.

    dfs.replication (data replication value) = 1

    (In the path given below, hadoop is the user name.
    hadoopinfra/hdfs/namenode is the directory created by the hdfs file system.)
    namenode path = //home/hadoop/hadoopinfra/hdfs/namenode

    (hadoopinfra/hdfs/datanode is the directory created by the hdfs file system.)
    datanode path = //home/hadoop/hadoopinfra/hdfs/datanode

Open this file and add the following properties between the <configuration> and </configuration> tags.

    <configuration>
       <property>
          <name>dfs.replication</name>
          <value>1</value>
       </property>
       <property>
          <name>dfs.name.dir</name>
          <value>file:///home/hadoop/hadoopinfra/hdfs/namenode</value>
       </property>
       <property>
          <name>dfs.data.dir</name>
          <value>file:///home/hadoop/hadoopinfra/hdfs/datanode</value>
       </property>
    </configuration>

Note: In the above file, all the property values are user-defined and you can change them according to your Hadoop infrastructure.

yarn-site.xml

This file is used to configure YARN in Hadoop.

Open the yarn-site.xml file and add the following properties between the <configuration> and </configuration> tags.

    <configuration>
       <property>
          <name>yarn.nodemanager.aux-services</name>
          <value>mapreduce_shuffle</value>
       </property>
    </configuration>

mapred-site.xml

This file is used to specify which MapReduce framework we are using. By default, Hadoop contains only a template of this file, mapred-site.xml.template. First, copy mapred-site.xml.template to mapred-site.xml using the following command.

    $ cp mapred-site.xml.template mapred-site.xml

Open the mapred-site.xml file and add the following properties between the <configuration> and </configuration> tags.

    <configuration>
       <property>
          <name>mapreduce.framework.name</name>
          <value>yarn</value>
       </property>
    </configuration>

Verifying Hadoop Installation

The following steps are used to verify the Hadoop installation.

Step 1: Name Node Setup

Set up the namenode using the command “hdfs namenode -format” as follows.

    $ cd ~
    $ hdfs namenode -format

The expected result is as follows.

    10/24/14 21:30:55 INFO namenode.NameNode: STARTUP_MSG:
    /************************************************************
    STARTUP_MSG: Starting NameNode
    STARTUP_MSG:   host = localhost/192.168.1.11
    STARTUP_MSG:   args = [-format]
    STARTUP_MSG:   version = 2.4.1
    ...
    10/24/14 21:30:56 INFO common.Storage: Storage directory /home/hadoop/hadoopinfra/hdfs/namenode has been successfully formatted.
    10/24/14 21:30:56 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0
    10/24/14 21:30:56 INFO util.ExitUtil: Exiting with status 0
    10/24/14 21:30:56 INFO namenode.NameNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down NameNode at localhost/192.168.1.11
    ************************************************************/

Step 2: Verifying Hadoop dfs

The following command starts dfs. Executing it will start your Hadoop file system.

    $ start-dfs.sh

The expected output is as follows:

    10/24/14 21:37:56
    Starting namenodes on [localhost]
    localhost: starting namenode, logging to /home/hadoop/hadoop-2.4.1/logs/hadoop-hadoop-namenode-localhost.out
    localhost: starting datanode, logging to /home/hadoop/hadoop-2.4.1/logs/hadoop-hadoop-datanode-localhost.out
    Starting secondary namenodes [0.0.0.0]

Step 3: Verifying Yarn Script

The following command is used to start the yarn script.

Executing this command will start your YARN daemons.

    $ start-yarn.sh

The expected output is as follows:

    starting yarn daemons
    starting resourcemanager, logging to /home/hadoop/hadoop-2.4.1/logs/yarn-hadoop-resourcemanager-localhost.out
    localhost: starting nodemanager, logging to /home/hadoop/hadoop-2.4.1/logs/yarn-hadoop-nodemanager-localhost.out

Step 4: Accessing Hadoop on Browser

The default port number to access Hadoop is 50070. Browse to that port on the machine running Hadoop (localhost in this setup) to reach the Hadoop services in a browser.

Step 5: Verify All Applications for Cluster

The default port number to access all applications of the cluster is 8088. Browse to that port on the same machine to visit this service.
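Besides the web pages, the running daemons can be checked from the command line. The sketch below assumes the JDK's `jps` tool, which this guide does not otherwise use, and assumes the five daemons of a pseudo-distributed Hadoop 2.x setup. The function name `missing_daemons` is made up for illustration.

```shell
#!/bin/sh
# Read jps-style output ("<pid> <ClassName>" per line) on stdin and print
# the expected daemons that are absent. The daemon list assumes a
# pseudo-distributed Hadoop 2.x setup.
missing_daemons() {
    # Keep only the class name column of the input.
    running=$(awk '{ print $2 }')
    for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
        # -x matches whole lines, so "NameNode" does not match "SecondaryNameNode".
        printf '%s\n' "$running" | grep -qx "$daemon" || echo "$daemon"
    done
}
```

With the daemons started, `jps | missing_daemons` should print nothing. Any name it prints points to a daemon whose log file is worth reading.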